When AI starts to pay off in field service


AI pilots are everywhere in field service. Automatic ticket classification. Smarter routing suggestions. Predictive dashboards. The results often look promising during the pilot phase. Accuracy improves, insights become clearer, and the technology shows real potential.

But when the pilot ends and daily operations resume, very little actually changes.

Dispatch still reviews every ticket manually.

Schedulers still override assignments “just in case.”

Reports still get rebuilt outside the system.

The model works, but the workflow around it stays the same. That’s where many AI initiatives stall.

AI only starts to pay off when it becomes part of execution — not when it sits beside it.

Why AI pilots in field service stall

Most pilots are designed to prove that the technology works.

But field service doesn’t reward technical accuracy. It rewards operational stability.

Three things usually hold AI back.

1) AI doesn’t replace operational decisions

If dispatch still reviews 100% of tickets, triage automation hasn’t reduced workload — it has simply added another step.

If automated scheduling suggests assignments but coordinators override them constantly, trust never forms. The system remains advisory.

AI creates value when it absorbs routine decisions, not when it merely comments on them.

2) Success is measured in model accuracy instead of operations

Accuracy percentage is not an operational KPI.

Better questions are:

- Did manual triage time drop?

- Did first-time fix improve?

- Did SLA volatility decrease?

- Can the same team handle higher ticket volume?

If those numbers don’t move, the pilot hasn’t changed the system.
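One way to keep a pilot honest is to compute these operational figures for a period before and a period after the change, and compare them. The sketch below is illustrative only: the `Ticket` fields, the KPI names, and the use of the spread of resolution times as a stand-in for SLA volatility are all assumptions, not definitions taken from any particular field service system.

```python
from dataclasses import dataclass
from statistics import pstdev


@dataclass
class Ticket:
    manually_triaged: bool    # did a dispatcher have to review it? (assumed field)
    fixed_first_visit: bool   # resolved without a follow-up visit? (assumed field)
    hours_to_resolve: float   # elapsed time from intake to resolution (assumed field)


def operational_kpis(tickets: list[Ticket]) -> dict[str, float]:
    """Summarize one period using operational measures, not model accuracy."""
    n = len(tickets)
    if n == 0:
        raise ValueError("need at least one ticket")
    return {
        "manual_triage_rate": sum(t.manually_triaged for t in tickets) / n,
        "first_time_fix_rate": sum(t.fixed_first_visit for t in tickets) / n,
        # Spread of resolution times, used here as a rough proxy for SLA volatility.
        "resolution_spread_hours": pstdev([t.hours_to_resolve for t in tickets]),
        "ticket_volume": float(n),
    }


def kpi_deltas(before: list[Ticket], after: list[Ticket]) -> dict[str, float]:
    """Change per KPI between two periods (after minus before)."""
    b, a = operational_kpis(before), operational_kpis(after)
    return {name: a[name] - b[name] for name in b}
```

If `kpi_deltas` returns values close to zero for every entry, the pilot has probably improved the model without changing the operation around it.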

3) Inconsistent workflows limit automation

AI amplifies structure. It also amplifies confusion.

If ticket categories overlap, automation spreads inconsistency faster.

If SLA rules change depending on who is on shift, scheduling logic becomes unstable.

The issue isn’t that AI is immature. It’s that execution isn’t standardized.

AI and automation work together in field service

This is where many initiatives lose clarity.

In field service, automation is the execution layer. It moves work from ticket to technician using defined logic such as skills, availability, SLAs, and routing rules.

AI strengthens that layer. It improves how information is interpreted and how decisions are made within those workflows.

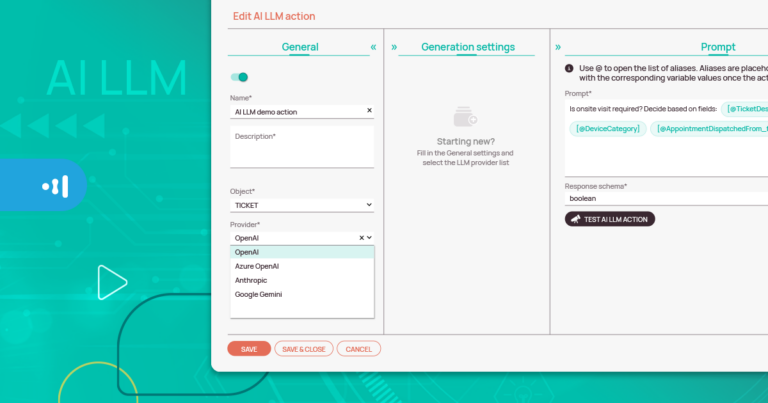

For example, AI can help categorize incoming tickets, detect patterns in service requests, or support prioritization before automated dispatch takes over.

When AI operates outside the workflow — as a dashboard, report, or side tool — it mostly generates insights.

When it strengthens automation inside the workflow, it changes how work actually moves through the system.

That’s the difference between insight and operational impact.

Measuring value before scaling

AI doesn’t need a year to prove its value.

When it’s embedded directly into service workflows, the impact becomes visible quickly.

For example:

If automated ticket triage reduces daily manual review from 120 tickets to 40 exception cases, dispatch immediately feels the difference.

If scheduling logic reduces travel time and eliminates constant reshuffling, coordination becomes far more predictable.

If real-time dashboards replace weekly spreadsheet consolidation, managers regain time that was previously spent compiling reports.

These aren’t theoretical ROI projections. They are operational shifts.

AI starts to pay off when coordination pressure drops.

From AI pilot to operational practice

The transition happens when automation carries more of the coordination burden.

In field service, that usually becomes visible in three areas first.

Ticket handling

Structured intake, automatic categorization, and clear routing rules reduce triage queues. Instead of reviewing every request manually, teams focus on exceptions.
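The exception-only pattern can be sketched as a simple split on classifier confidence. Everything here is hypothetical: the `Prediction` shape, the category names, and the 0.85 threshold are placeholders that a real team would replace with its own categories and a cut-off tuned against observed overrides.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    ticket_id: str
    category: str
    confidence: float  # 0.0 to 1.0, as reported by a hypothetical classifier


KNOWN_CATEGORIES = {"installation", "repair", "maintenance"}  # illustrative only
CONFIDENCE_THRESHOLD = 0.85  # assumed cut-off, not a recommended value


def split_for_triage(predictions: list[Prediction]):
    """Return (auto_routed, exceptions) so dispatch reviews only the exceptions."""
    auto_routed, exceptions = [], []
    for p in predictions:
        confident = p.confidence >= CONFIDENCE_THRESHOLD
        if confident and p.category in KNOWN_CATEGORIES:
            auto_routed.append(p)   # handed straight to routing rules
        else:
            exceptions.append(p)    # low confidence or unknown category
    return auto_routed, exceptions
```

The share of tickets landing in `exceptions` is the number dispatch actually feels, so it is worth tracking alongside the threshold itself.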

Scheduling

Assignments are driven by skills, availability, SLA priority, geography, and part readiness — without constant manual balancing.

When dispatch only intervenes in edge cases, productivity increases without adding headcount.
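As a rough illustration of rule-driven assignment, the sketch below handles the most urgent SLA first, picks the nearest available technician with the required skill, and leaves anything it cannot place for dispatch. It is a deliberately simple greedy approach under stated assumptions, not how any specific scheduling engine works; the `Technician` and `Job` fields and the precomputed distances are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Technician:
    name: str
    skills: set[str]
    available: bool
    distance_to: dict[str, float]  # job_id -> km, assumed to be precomputed


@dataclass
class Job:
    job_id: str
    required_skill: str
    sla_hours_left: float
    parts_ready: bool


def assign(jobs: list[Job], technicians: list[Technician]):
    """Greedy assignment: most urgent SLA first, nearest qualified technician."""
    assignments: dict[str, str] = {}
    exceptions: list[str] = []
    free = [t for t in technicians if t.available]
    for job in sorted(jobs, key=lambda j: j.sla_hours_left):
        candidates = [t for t in free if job.required_skill in t.skills]
        if not job.parts_ready or not candidates:
            exceptions.append(job.job_id)  # edge case left for dispatch
            continue
        best = min(candidates, key=lambda t: t.distance_to[job.job_id])
        assignments[job.job_id] = best.name
        free.remove(best)  # one job per technician in this simplified sketch
    return assignments, exceptions
```

Only the job IDs in `exceptions` need a coordinator's attention, which is the point at which dispatch intervenes in edge cases rather than balancing every assignment by hand.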

Reporting

Operational dashboards reflect real-time execution. There is no parallel tracking and no end-of-week reconciliation.

This is where embedded automation inside your field service management software turns AI from an experiment into operational leverage.

Not through a long transformation program, but through visible daily change.

Conclusion

Most AI pilots in field service don’t fail technically.

They stall because they remain observational instead of operational.

Real ROI appears when routine coordination no longer depends on constant manual decisions. Dispatch workload decreases, SLA performance stabilizes, and execution becomes more predictable even as service volumes grow.

That’s when AI moves from experiment to everyday practice.

If you’d like to see how automation and AI work together in practice, book a personalized demo and discover how field service operations can run with less coordination effort and greater stability.

Knowledge tip

If your AI initiative hasn’t reduced manual triage, scheduling overrides, or SLA volatility within months, it’s likely still in pilot mode. Real value appears when AI strengthens automation inside your field service management software – not when it operates as a separate experiment.

FAQ

Why do AI projects in field service stall after the pilot stage?

Many AI initiatives improve analysis but don’t replace manual decisions inside dispatch and scheduling workflows. Without integration into daily service execution, AI remains an experiment instead of delivering real operational impact.

What is the first sign that AI is delivering ROI in field service?

A clear reduction in manual coordination. Fewer ticket reviews, fewer scheduling overrides, and more stable SLA performance usually signal that AI is supporting execution rather than just providing insights.